The National Interagency Fire Center (NIFC) has been the keeper of U.S. wildfire data for decades, tracking both the number of wildfires and acreage burned all the way back to 1926. After making the entire dataset public for decades, NIFC has now, in a blatant act of cherry picking, “disappeared” a portion of it and shows only data from 1983 onward. You can see it here.

Fortunately, the Internet never forgets, and the entire dataset is preserved on the Internet Wayback Machine. Data prior to 1983 show U.S. wildfires were far worse 100 years ago, both in frequency and total acreage burned, than they are now, 100 years of modest warming later.

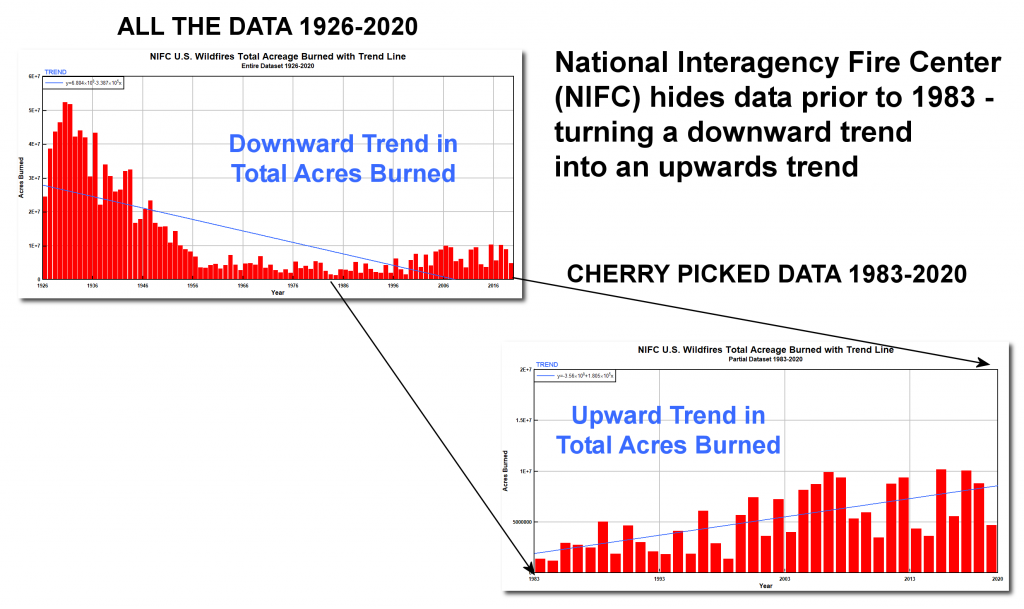

By disappearing all data prior to 1983, which just happens to be the lowest point in the data set for the number of fires, NIFC data now show a positive slope of worsening wildfire aligning with increased global temperature. This truncated data set is perfect for claiming “climate change is making wildfire worse,” but flawed because it lacks the context of the full data set. See Figure 1 for a before-and-after comparison of what the NIFC data look like when plotted.

The full data set shows wildfires were far worse in the past.

In June 2011, when the data set was first made publicly available by the NIFC, the agency said,

“Figures prior to 1983 may be revised as NICC verifies historical data.”

In December 2017, I published an article, titled “Is climate change REALLY the culprit causing California’s wildfires?,” pointing out the federal government’s own data showed wildfires had declined significantly since the early 1900s, which undermined claims being made by the media that climate change was making wildfires more frequent and severe. Curiously, between January 14 and March 7 of 2018, shortly after I published my article, NIFC added a new caveat on its data page stating:

The National Interagency Coordination Center at NIFC compiles annual wildland fire statistics for federal and state agencies. This information is provided through Situation Reports, which have been in use for several decades. Prior to 1983, sources of these figures are not known, or cannot be confirmed, and were not derived from the current situation reporting process. As a result the figures prior to 1983 should not be compared to later data.

With the Biden administration now in control of NIFC, the agency now says,

“Prior to 1983, the federal wildland fire agencies did not track official wildfire data using current reporting processes. As a result, there is no official data prior to 1983 posted on this site.”

This attempt to rewrite official United States fire history for political reasons is both wrong and unscientific. NIFC never previously expressed concern its historical data might be invalid, or shouldn’t be used. NIFC’s data have been relied upon by peer reviewed research papers and news outlets in the United States for decades. Without this data, there is no historical scale for the severity of wildfires, nor is there a method to compare the number of wildfires in the past with the numbers today.

Wildfire data are fairly simple to record and compile: count the number of fires and the number of acres burned. NIFC’s revision of wildfire history is essentially labeling every firefighter, every fire captain, every forester, and every smoke jumper who has fought wildfires for decades as being untrustworthy in their assessment and measurement of this critical, yet very simple fire data.

The reason for NIFC erasing wildfire data before 1983 is not transparent at all. NIFC cites no study and provides no scientifically sound methodological reason not to trust the historic data that the agency previously publicized and referenced. Indeed, NIFC provides no rationale at all for removing the historical data or justifying any claim that it was flawed or incorrect.

If a change in the way scientists or bureaucrats track and calculate data over time legitimately justifies throwing out or dismissing every bit of evidence gathered before contemporary processes were followed, there would be no justification for citing past data on temperatures, floods, droughts, hurricanes, or demographics and economic data.

The way all of these and other “official” records have been recorded has changed dramatically over time. Even the way basic temperature is recorded is constantly evolving, from changes in where temperatures are recorded, to how they have been recorded. Changes in temperature recording include starting from a few land-based measuring stations and ocean-going ship measurements, evolving to weather balloons, then satellites, and now geostationary ocean buoys. If simply changing the way a class of data is recorded justifies jettisoning all historic data sets, then no one can say with certainty that temperatures have changed over time or that a human fingerprint of warming has been detected.

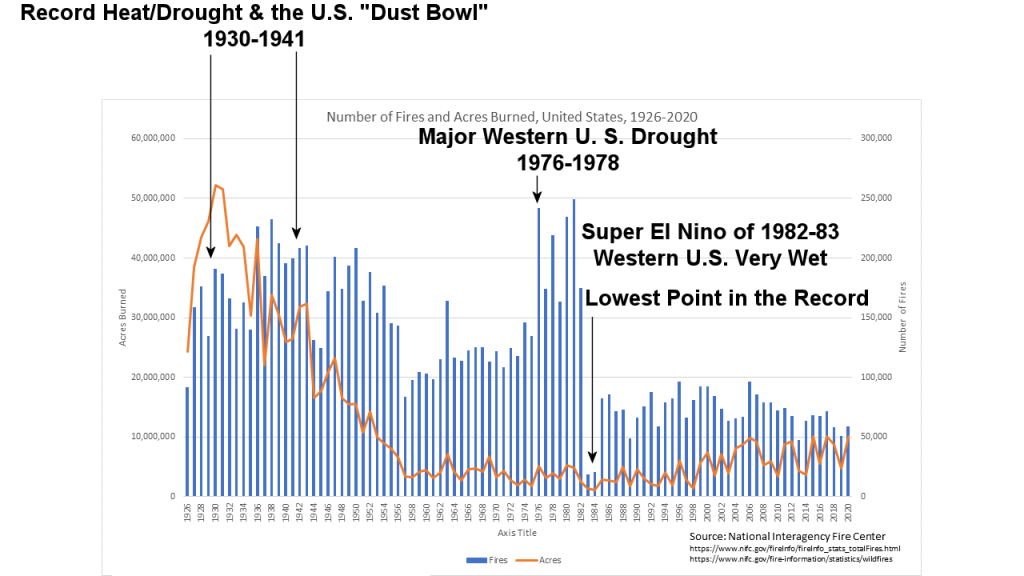

Plotting the entire NIFC data set (before it was partially disappeared) demonstrates that wildfire and weather patterns have been inextricably linked for decades. Note Figure 2, which combines the number of fires and number of acres burned. See the annotations that I have added.

The NIFC decision to declare data prior to 1983 “unreliable” and remove it is not just hiding important fire history, but cherry picking a starting point that is the lowest in the entire record to ensure that an upward trend exists from that point.

The definition of cherry picking is:

Cherry picking, suppressing evidence, or the fallacy of incomplete evidence is the act of pointing to individual cases or data that seem to confirm a particular position while ignoring a significant portion of related and similar cases or data that may contradict that position.
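The effect of a chosen starting point on a fitted trend can be illustrated with a minimal sketch. The numbers below are entirely synthetic and hypothetical (they are not NIFC figures); they only mimic the general shape described here: high early values, a minimum in the middle, and a partial rise afterward. The helper names `linear_slope` and `slope_from` are also illustrative assumptions.

```python
def linear_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var


# Synthetic series (NOT real wildfire data): one value per decade,
# with the minimum placed at 1980.
years = list(range(1930, 2021, 10))
values = [50, 40, 30, 20, 10, 3, 4, 6, 8, 10]


def slope_from(start_year):
    """Fit a trend using only the points at or after start_year."""
    pairs = [(y, v) for y, v in zip(years, values) if y >= start_year]
    xs = [p[0] for p in pairs]
    ys = [p[1] for p in pairs]
    return linear_slope(xs, ys)


full_slope = slope_from(1930)       # whole synthetic record
truncated_slope = slope_from(1980)  # record starting at the minimum

print("full record slope:     ", full_slope)
print("truncated record slope:", truncated_slope)
```

With this made-up series, the fit over the whole record slopes downward while the fit that starts at the minimum slopes upward, which is the kind of sign flip a truncated starting point can produce.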

It seems NIFC has caved to political pressure to disappear inconvenient wildfire data. This action is unscientific, dishonest, and possibly fraudulent. NIFC is no longer trustworthy as a source of reliable information on wildfires.

I disagree with anyone trying to link acres burned directly with climate change. The acres burned depend more on forest management, or lack of management, and how well firefighters do their jobs.

The global average temperature gradually getting slightly warmer over the past 100 years does not cause people to accidentally start (or deliberately start) forest fires.

Roughly 90% of the fires are said to be caused by humans, or related to humans (downed power lines).

What does that have to do with a little warming in 100 years?

During the fire season, California was ALWAYS dry enough to have forest fires. A few tenths of a degree warmer can’t make dry brush even drier! A friend who lives in Santa Rosa, CA suffered during the last big fire, because CA does not manage its forests, and utility companies don’t trim their trees near transmission lines often enough. Forests that are not managed are vulnerable to massive fires that are very hard to put out. … As her CA governor blamed “climate change”.

Most important is the chart.

I looked into the details of the acres burned chart a few years ago. Why so many acres burned before World War II?

The reason for that, based on the data I found, was a huge number of acres burned in the southeastern states.

These came mainly from prescribed fires to clear dead brush and trees, set by the Civilian Conservation Corps when they were not busy planting trees, etc. Delete the southeastern fire zone with all those prescribed fires, look only at the rest of the US, and the chart would look much different.

Making a comment, like the one I just made, got me permanently banned from making future comments at the climate skeptic website: realclimatescience.com

Richard Greene

Bingham Farms, Michigan

http://www.elOnionBloggle.Blogspot.com

Thank you Richard…it is telling that you were banned after making statements of “fact” as they never want to honestly debate/discuss their own “facts”…

I disagree Richard. Mother Nature is angry and is spontaneously combusting to show her displeasure. At least that’s what AlGore has been telling us for 30 years.

WATTS IS THE MAN OF THE HOUR FOR HAVING BEEN INVOLVED WITH REPORTING AS LONG AS HE HAS BEEN. THE HEARTLAND INSTITUTE SHOULD BE PROUD.

I found it interesting that there are several plant species that require FIRE to prepare the seeds to germinate, including the Giant and Noble Sequoia. Common sense tells me plants would never have adapted to need this if forest fires were not common long before man existed.

There is a good reason why the fire center deleted those data from its web site. They are bad data. Before about 1950 to 1955 (depending on the state), the Forest Service counted prescribed fires as wildfires. This greatly inflated the acres, especially since forest land owners in many southern states burned their forests every four or five years.

Data showing acres burned before 1955 are not comparable with data after 1955. I have covered this issue in several papers and articles, most recently https://ti.org/pdf/APB18.pdf.

“Bad data”? It was used in several peer reviewed papers, and it was accepted for years. Why did it suddenly become “bad data” only when people started pointing out that there were many more fires before?

“bad data” is in some cases the linguistic correspondence for cherry picking

Maybe there are those kinds of biases in the data, but they would still show that forest management, including prescribed burns and suppression, is the main driver of the fire area signal. Even according to your interpretation, total area burned in 1930 was five times as large as wildfire area now.

Compare historical photos of the West to current ones and notice that there are a lot more trees now than there used to be.

https://artsourceinternational.com/wp-content/uploads/2018/04/P-1259-FLATIRONS-1.jpg

https://hashtagcoloradolife.com/wp-content/uploads/2020/07/chautauqua-park.png

Any reasonable scientist would start with the assumption that modern fire suppression is an enormous factor, while climate is a hard-to-detect factor.

Um, a fire is a fire, regardless of how it started, is it not? That leads directly to the conclusion that there were more fires 90-100 years ago, when the climate was a fraction of a degree cooler than it is now. The whole point of saying this, and this whole discussion, is that after datasets were deleted from public view, the post 1983 data was used to make the claim by government, academia and climate science centers that fires have increased due to GLOBAL WARMING. This is easily determined to be false, when the entire dataset is included. Thank you for posting your statement above. It provides valuable insight into how climate data is being purposely manipulated, in order to fit the preconceived ideas of the climate science community. I just wonder, how can you and climate science miss such a simple fact of a fire being a fire? If you are in fact knowingly misrepresenting climate data, rather than simply misinterpreting climate data, how could you do that? Why would you do that?